Healthcare AI Hit 75% Adoption. Only 18% Is Governed.

- Apr 18

Two 2026 industry reports reveal the defining gap in healthcare AI — and what it means for the next eighteen months of adoption, compliance, and risk.

Somewhere between a pilot and a press release, an uncomfortable truth has crept into healthcare: AI is no longer an experiment. It is infrastructure. And most organizations deploying it have not yet built the guardrails that infrastructure requires.

Two studies published in early 2026, Eliciting Insights' "Health System Adoption of AI Solutions" and the HFMA / Eliciting Insights "Health System Readiness for Artificial Intelligence" report, arrive at the same conclusion from different angles. One catalogues the remarkable pace at which AI is being embedded into clinical, financial, and revenue cycle operations. The other quietly notes that many of the organizations doing this lack a governance structure, a formal data policy, or staff with the skills to evaluate what they've bought.

As Fierce Healthcare covered last month, the takeaway is unambiguous: healthcare AI has moved beyond pilots.

"In 2026, health systems are embracing AI to address both workforce constraints and financial pressures. Organizations have moved beyond pilots and are now strategically deploying solutions that directly impact provider burnout and the bottom line." - Trish Rivard, CEO, Eliciting Insights

At ALIGNMT AI, we've spent the last two years building for exactly this moment. Here's what the reports actually say, and what health systems need to do about it before the next regulatory cycle.

The adoption wave

The headline number is 75%. Three in four health systems have either implemented or are planning to implement at least one AI solution — a 27% jump in a single year. Broaden the lens from "solutions" to "any AI" and the figure climbs to 88%, according to HFMA's survey of 233 health systems. Fewer than one in twenty is on the sidelines.

The shape of the growth matters. Multi-solution adoption (systems running three or more AI tools) jumped from 30% to 59% in a single year. On those figures, that is a 29-point gain, close to a doubling of the share, and a structural shift. Single-solution experimentation is being replaced by portfolio deployment.
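
Year-over-year figures can be read either as percentage-point changes or as relative changes, and the two can differ widely. A minimal sketch of the arithmetic, using the multi-solution shares quoted above (30% and 59%) as inputs; the function names are illustrative, and published growth figures may rest on unrounded or differently defined baselines.

```python
def point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change between two shares, in percentage points."""
    return after_pct - before_pct


def relative_change(before_pct: float, after_pct: float) -> float:
    """Relative change, expressed as a percent of the starting share."""
    if before_pct == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (after_pct - before_pct) / before_pct * 100


# Multi-solution adoption shares as quoted in the text (rounded survey figures).
before, after = 30.0, 59.0
print(f"{point_change(before, after):.0f} points")        # 29 points
print(f"{relative_change(before, after):.1f}% relative")  # 96.7% relative
```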

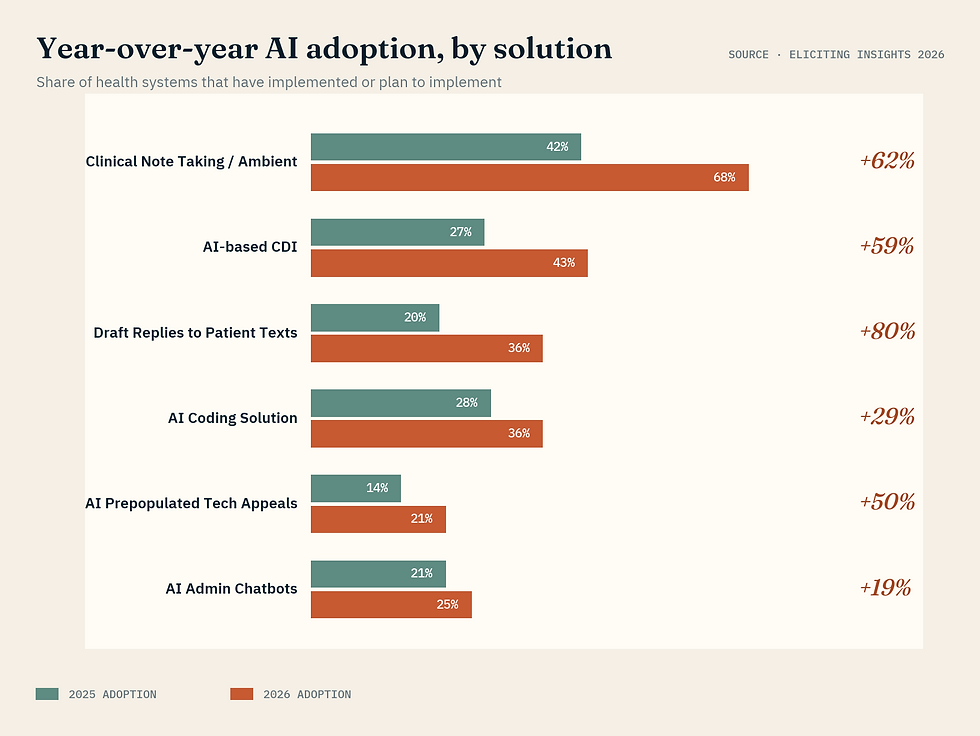

The category leaders:

• Clinical note-taking and ambient listening: 68% adoption, up 62% YoY

• Draft replies to patient texts: up 80% YoY

• AI-based clinical documentation improvement: up 59%

• Prepopulated technical appeals: up 50%

For every solution category, more health systems are evaluating than currently running. The "considering" pipeline is larger than the installed base. This is not a market being politely introduced to AI. It is a market in the middle of adoption — and the vendors best positioned to win the next eighteen months are the ones who can help health systems scale these deployments responsibly, not just quickly.

The readiness gap — and why it matters now

Plot AI strategy on one axis and governance structure on the other, and a sobering picture emerges. Only 18% of health systems land in the "mature" quadrant, meaning they have both a documented AI strategy and a governance group with teeth. Another 38% have strategy without governance. A full 42% have neither.
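
The two-axis view can be sketched as a simple classification. This is an illustration only: the `HealthSystem` fields and every quadrant label other than "mature" are hypothetical names, not terms from the reports. The share for the fourth quadrant is not quoted above, so the code derives only the remainder, assuming the quadrants are exhaustive and the quoted shares are rounded.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthSystem:
    name: str
    has_documented_strategy: bool  # hypothetical field
    has_governance_group: bool     # hypothetical field


def quadrant(system: HealthSystem) -> str:
    """Place a system in one of four strategy/governance quadrants."""
    if system.has_documented_strategy and system.has_governance_group:
        return "mature"
    if system.has_documented_strategy:
        return "strategy without governance"
    if system.has_governance_group:
        return "governance without strategy"
    return "neither"


# Shares quoted in the text; the fourth quadrant is not reported there.
reported = {"mature": 18, "strategy without governance": 38, "neither": 42}
unreported = 100 - sum(reported.values())

print(quadrant(HealthSystem("Example Health", True, False)))
print(f"Remainder not covered by the quoted shares: {unreported}%")
```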

The resourcing story is even starker. Eighty-three percent of health systems lack the resources to identify, select, and implement AI solutions. Among systems that have already invested in AI, more than a third of even the mature programs say they don't have enough implementation muscle. Among the non-mature majority, over half say the same.

What fills the vacuum? Vendors. Seventy percent of health systems without a mature AI program admit they rely on vendors to identify AI opportunities for them. That is a striking admission — and a warning. When the party selling the solution is also the party defining the problem, governance stops being a nice-to-have. It becomes the difference between a controlled rollout and a compliance incident waiting for a headline.

Three numbers explain the governance gap, all from systems that have already invested in AI:

• 35% have no formal AI data policy

• 47% cite data sharing as a top barrier — the absence of policy has become a brake on adoption itself

• 70% of non-mature programs rely on vendors to define where to use AI

This is the operating reality our platform was built to address. Governance shouldn't live in a three-ring binder. It should live in your production stack — alongside the models themselves.

The ROI is real — and so is the exposure

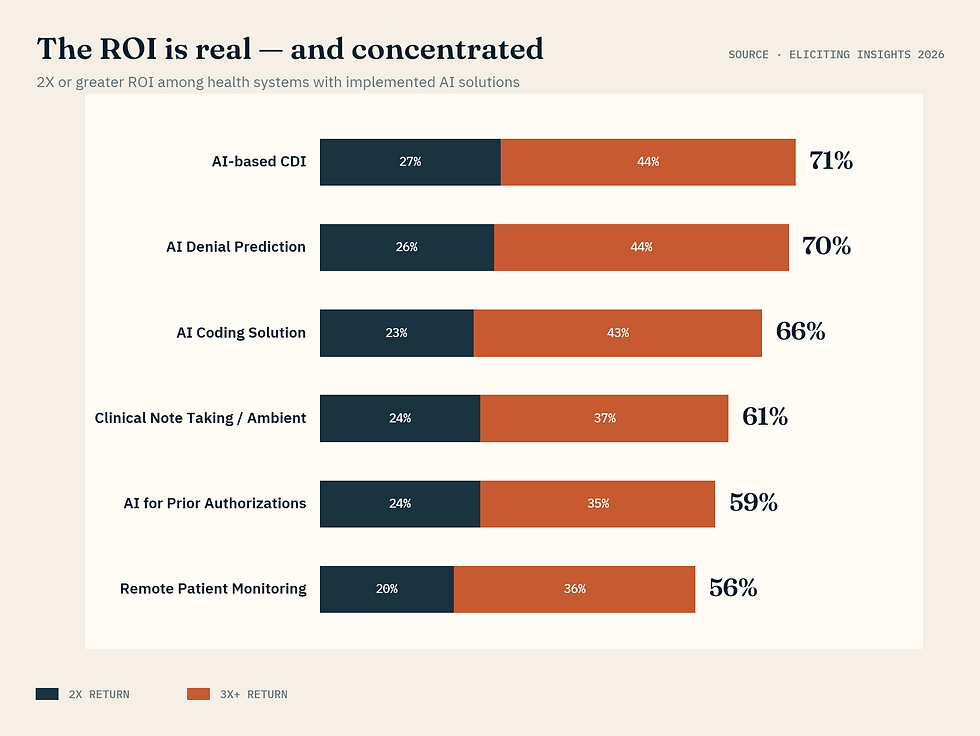

It would be easy to mistake this story for a cautionary tale. It isn't. Health systems that have implemented AI are, by and large, getting their money back — and often far more. Of respondents able to measure ROI, more than half report at least 2x returns on most categories.

The highest-performing categories:

• AI-based CDI: 71% of implementers report 2x+ returns, with 44% reporting 3x+

• AI denial prediction: 70% report 2x+

• AI coding: 66% report 2x+

• Clinical note-taking / ambient: 61% report 2x+

Here is the catch. Every one of those ROI figures is calculated before the regulatory math shows up. CHAI transparency standards, ONC HTI-1 requirements, state-level AI bills, and the DOJ's sharpened focus on algorithmic harm are all converging on 2026 and 2027. A single unmitigated model failure (a bias finding, a hallucination in clinical documentation, a denial-prediction model that quietly disadvantages a protected class) can eat multiple years of return in a single quarter.

CFOs seem to sense this, even if they can't yet name it. Sixty percent of health system CFOs call revenue cycle the single biggest AI opportunity — but only 39% believe AI will actually reduce overall costs. The gap between those two numbers is a risk premium. It is the cost of operating without a governance floor.

"Health systems are investing in AI solutions before they have the internal governance, data policies, or resources to responsibly operate them." - HFMA Health System Readiness for Artificial Intelligence, 2025

What the top 18% have figured out

Dig into the mature-program cohort and a pattern emerges. These systems don't treat AI governance as a compliance checkbox bolted on after procurement. They treat it as part of the AI stack itself — continuous, automated, and embedded in the production workflow.

Among mature programs:

• 72% delegate data policy to a dedicated AI governance group rather than leaving it to executive leadership or IT

• 80% vet vendors through a structured, repeatable process (versus 64% of non-mature programs)

• 68% have AI deployed across all three major functional areas — clinical, financial, and RCM — rather than in a single silo

• When sharing patient data with a vendor, they require security credentials, third-party validation, and documented performance guarantees — not a handshake
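
A structured, repeatable vetting step like the one in the last bullet can be expressed as an explicit gate rather than a judgment call. The sketch below is a hypothetical checklist, not a process described in either report; the class, field, and function names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorSubmission:
    vendor: str
    security_credentials: bool      # e.g. evidence of a security attestation
    third_party_validation: bool    # independent validation of the model
    performance_guarantees: bool    # documented, contractually stated


def missing_requirements(submission: VendorSubmission) -> list[str]:
    """Return the data-sharing requirements the vendor has not yet met."""
    checks = {
        "security credentials": submission.security_credentials,
        "third-party validation": submission.third_party_validation,
        "documented performance guarantees": submission.performance_guarantees,
    }
    return [name for name, satisfied in checks.items() if not satisfied]


def may_receive_patient_data(submission: VendorSubmission) -> bool:
    """Allow data sharing only when every requirement is satisfied."""
    return not missing_requirements(submission)


candidate = VendorSubmission("ExampleVendor", True, False, True)
print(may_receive_patient_data(candidate))  # False
print(missing_requirements(candidate))      # ['third-party validation']
```

Returning the list of unmet requirements, rather than a bare yes or no, gives the governance group something concrete to send back to the vendor.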

This is the operating model health systems in the 82% majority now need to adopt — fast. Rivard is right: the pilot era is over. But scaling AI without governance isn't scaling. It's stacking risk. And the pace of adoption leaves no room to build the governance layer the slow way.

Governance as infrastructure — how ALIGNMT AI closes the gap

ALIGNMT AI was built for exactly the moment these two reports describe. We are an AI governance and risk monitoring platform purpose-built for healthcare — the infrastructure layer that lets health systems, payers, and health IT enterprises scale AI without turning compliance into a bottleneck or a liability.

Our platform does what manual compliance programs cannot:

• CONTINUOUS RISK MONITORING. We watch AI systems in production, flagging bias, model drift, judgment errors, and adversarial threats in real time — without exposing sensitive patient data.

• AUTOMATED AUDIT TRAILS. Documentation for CHAI, ONC HTI-1, DOJ algorithmic fairness standards, and the EU AI Act is generated as a byproduct of how the platform works, not as a year-end scramble.

• UNIFIED OVERSIGHT. CIOs, CFOs, and AI governance groups get a single pane of glass across every AI tool deployed across the enterprise.
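
To make "model drift" concrete: one widely used drift statistic is the Population Stability Index (PSI), which compares the distribution of a model's scores in a baseline window against a recent window. The sketch below is a generic illustration, not a description of how ALIGNMT AI's platform works. It assumes scores lie in [0, 1], and the 0.2 alert threshold is a conventional rule of thumb rather than a regulatory standard. It also operates on model scores only, with no patient identifiers.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], recent: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of scores in [0, 1]."""
    if not baseline or not recent:
        raise ValueError("both samples must be non-empty")

    def bin_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so that out-of-range scores fall into the edge bins.
            idx = max(0, min(int(v * bins), bins - 1))
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = bin_shares(baseline), bin_shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


ALERT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff, tune per model

baseline_scores = [i / 100 for i in range(100)]  # scores spread across the range
recent_scores = [0.9] * 100                      # scores collapsed into one bin

value = psi(baseline_scores, recent_scores)
print(f"PSI = {value:.2f}, drift alert: {value > ALERT_THRESHOLD}")
```

A check like this would typically run on a schedule per deployed model, with each result logged so that the monitoring history doubles as audit evidence.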

The results, for health systems already running ALIGNMT AI in production:

• Up to 50% reduction in AI compliance audit preparation time

• Real-time risk detection that prevents the kind of bias, documentation, or billing errors that trigger penalties

• Seamless integration with existing enterprise AI workflows — governance that layers onto production, not around it

The vendors and operators who win the next eighteen months of healthcare AI will not be the ones with the flashiest model. They will be the ones who can prove their AI is safe, fair, and compliant — on demand, with receipts. That's the product we ship.

The pilot era is over. Scale with confidence.

If you're part of the 82% still building your AI governance layer — or the 18% that has one but needs it to keep up with the regulatory curve — we should talk before the next audit cycle.

Sources:

• Eliciting Insights, "Health System Adoption of AI Solutions," February 2026 (n=120)

• HFMA / Eliciting Insights, "Health System Readiness for Artificial Intelligence," 2025 (n=233)

• Additional reporting via Fierce Healthcare, March 2026
